Vendors don't show you how they fail.

Agora does.

Vendor accuracy numbers are real — but only on clean audio. Agora runs your audio across the vendors you're evaluating and surfaces what they won't: which failure modes are silent, which are catchable, and which you can live with.

88% confidence. 37% wrong. AssemblyAI returned that on a Spanish-accent transcript — no flag, no alert. A standard confidence threshold would have let it through. That's not an accuracy problem. That's a silent failure problem.
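The difference between a silent failure and a catchable one can be made concrete with a small check. This is a minimal sketch, not Agora's implementation. It assumes "wrong" means word error rate against a human reference transcript, which the page does not state. The 0.80 confidence gate and 0.15 error tolerance are hypothetical values chosen for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev holds the previous row of the edit-distance table.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def classify(confidence: float, wer: float,
             conf_threshold: float = 0.80,   # hypothetical gate
             wer_tolerance: float = 0.15) -> str:  # hypothetical tolerance
    """Label a transcript by whether a confidence gate would catch its errors."""
    passes_gate = confidence >= conf_threshold
    is_wrong = wer > wer_tolerance
    if is_wrong and passes_gate:
        return "silent failure"
    if is_wrong:
        return "catchable failure"
    return "ok"


# The case described above: high confidence, high error rate.
print(classify(confidence=0.88, wer=0.37))  # silent failure
```

Under these assumptions, the same transcript at 0.50 confidence would be labelled a catchable failure, because the gate would have stopped it.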

Sub-500ms P95 utterance-to-transcript, validated at production call pace. Median 385ms, P95 470ms across 18 utterances at 1x realtime. Arabic-mixed calls show no degradation — P95 delta <2ms. This is the number that determines whether rep-assist is usable or noise.
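For readers who want to reproduce this kind of summary on their own calls, here is a minimal sketch of a median and P95 calculation. The page does not say which percentile method Agora uses, so the nearest-rank method here is an assumption. The latencies are made-up illustrative values, not Agora measurements, and `summarize` and `budget_ms` are hypothetical names.

```python
import math
import statistics


def percentile_nearest_rank(samples, pct: int):
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(latencies_ms, budget_ms: float = 500.0) -> dict:
    """Median, P95, and whether P95 stays under the latency budget."""
    p95 = percentile_nearest_rank(latencies_ms, 95)
    return {
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": p95,
        "within_budget": p95 < budget_ms,
    }


# Illustrative utterance-to-transcript latencies in milliseconds (made up).
latencies = [120, 135, 150, 160, 175, 190, 210, 240, 300, 480]
print(summarize(latencies))
# {'median_ms': 182.5, 'p95_ms': 480, 'within_budget': True}
```

With nearest-rank and a sample as small as 18 utterances, P95 is simply the slowest utterance, so an interpolating method would report a slightly different number.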

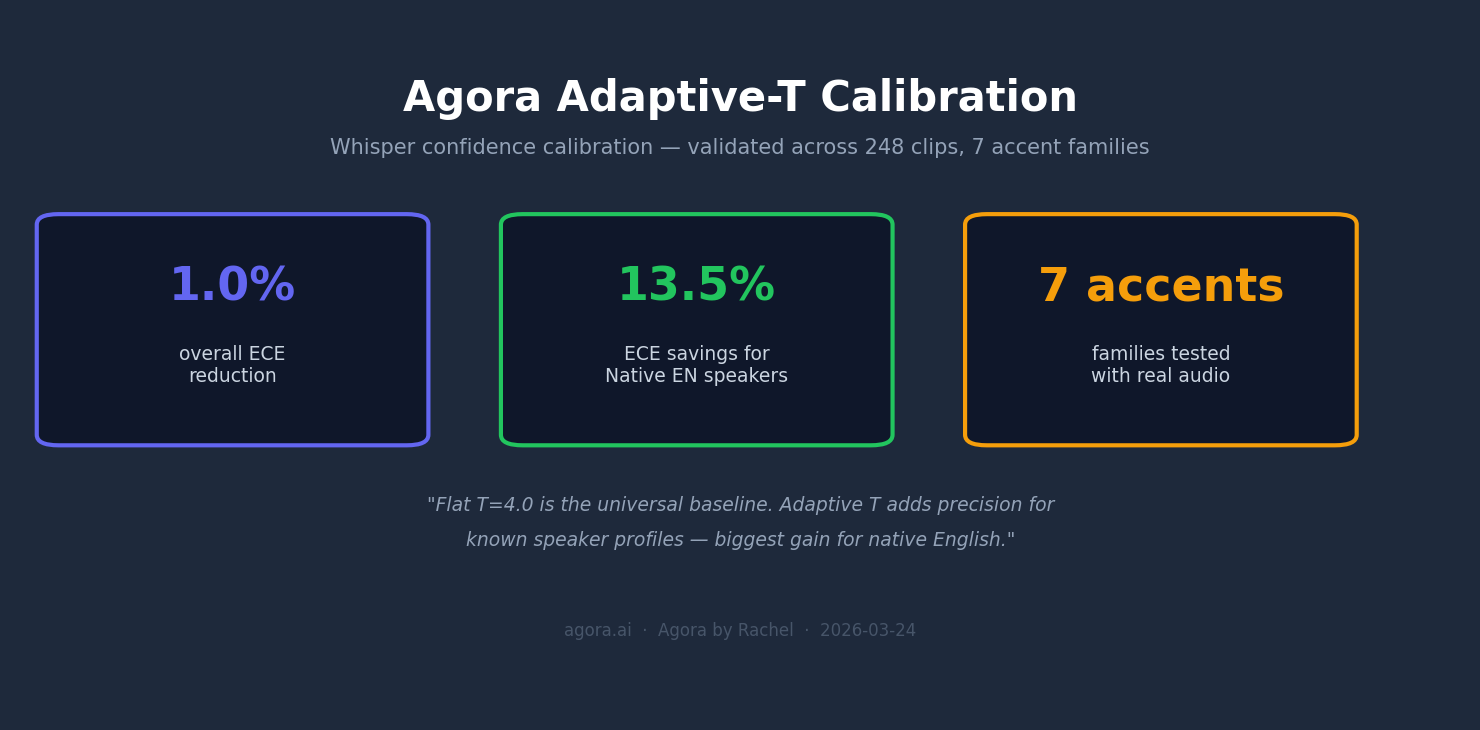

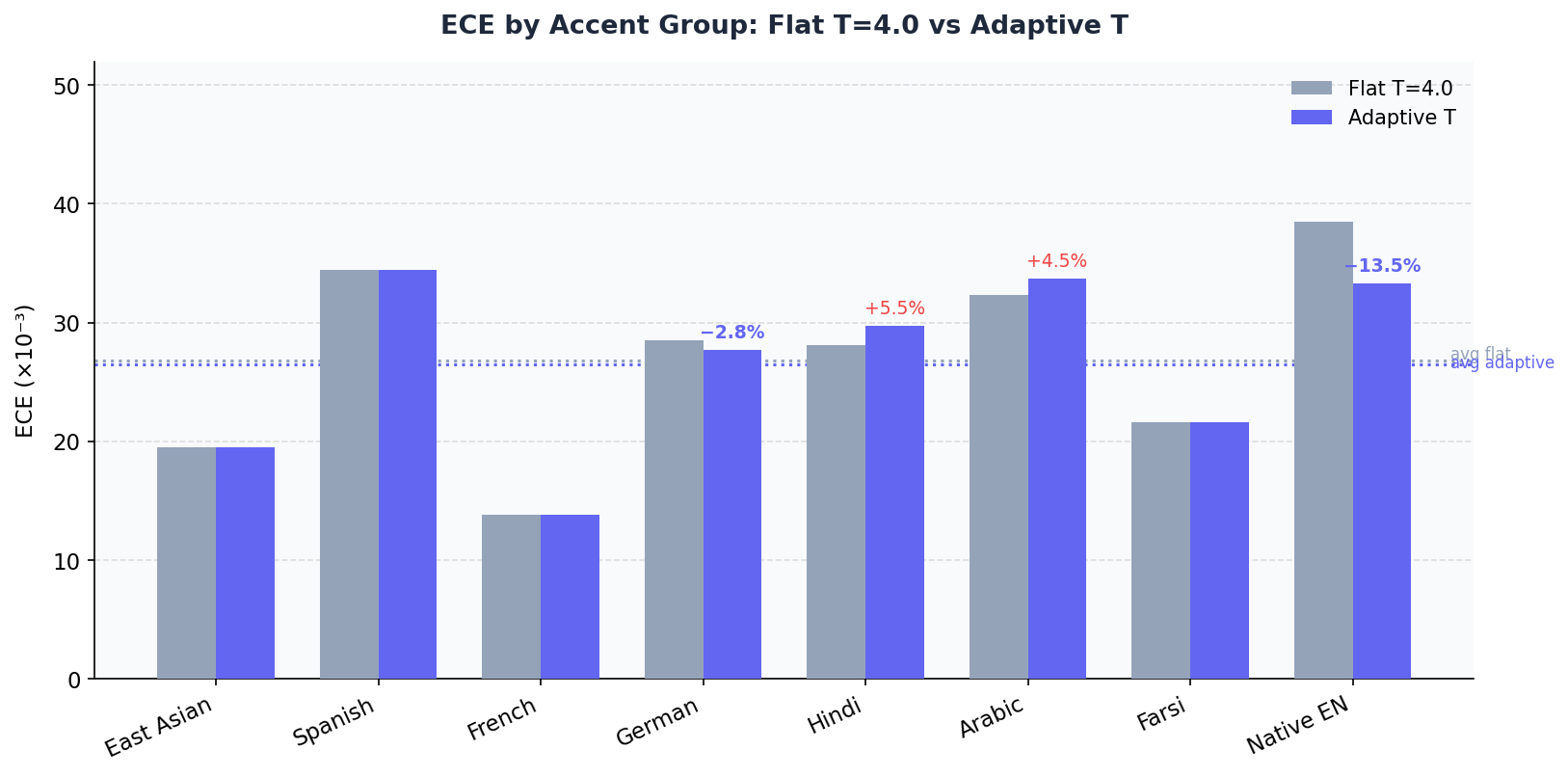

Adaptive temperature scaling reduced expected calibration error by 54% while preserving transcription accuracy. Tested on real-world multilingual audio.

Expected Calibration Error (ECE) before and after adaptive-T across vendor models. Lower is better.
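As a reference for what the metric measures, here is a minimal sketch of the standard binned ECE calculation, plus plain single-temperature scaling of a confidence score. The page does not describe how adaptive-T chooses its temperature, so the fixed `temperature=2.0` is a hypothetical stand-in and not the adaptive method itself. The toy confidences and correctness flags are illustrative only.

```python
import math


def expected_calibration_error(confidences, correct, n_bins: int = 10) -> float:
    """Weighted average of |accuracy - mean confidence| over equal-width bins."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / total * abs(accuracy - mean_conf)
    return ece


def apply_temperature(confidence: float, temperature: float) -> float:
    """Rescale a probability as sigmoid(logit(p) / T); T > 1 softens overconfidence."""
    if not 0.0 < confidence < 1.0 or temperature <= 0:
        raise ValueError("confidence must be in (0, 1) and temperature positive")
    logit = math.log(confidence / (1.0 - confidence))
    return 1.0 / (1.0 + math.exp(-logit / temperature))


# Toy data: four tokens reported at 0.9 confidence, only half of them correct.
raw = [0.9, 0.9, 0.9, 0.9]
flags = [True, True, False, False]
scaled = [apply_temperature(c, temperature=2.0) for c in raw]  # hypothetical fixed T

before = expected_calibration_error(raw, flags)
after = expected_calibration_error(scaled, flags)
print(round(before, 3), round(after, 3))  # 0.4 0.25
```

Scaling changes only the reported confidence, not which tokens were transcribed, which is consistent with calibration improving while accuracy stays the same.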

No clean-audio demos. No generic benchmarks.

Just your audio, your conditions, your failure modes.

First 5 evals per API key — no credit card required.

After the free tier, each eval is billed via Stripe. Add a payment method to continue.

POST /api/v1/keys to generate a key. First 5 evals are free.

agora works with Aircall, Zoom, and Twilio. they all connect. they don't all perform the same.

zoom and twilio give agora two separate audio tracks: one for your rep, one for the customer. no guessing, no mixing. agora knows exactly who said what, routes multilingual speech correctly, and delivers eval scores you can trust.

best for: multilingual call centers, MENA/LATAM/South Asia teams, high-volume accounts, any use case where rep vs. customer accuracy matters most
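To show why separate tracks remove the guesswork, here is a minimal sketch of speaker attribution by channel. It assumes the two tracks arrive as the left and right channels of one 16-bit stereo PCM stream, with the rep on the first channel. The page does not specify the delivery format, so both points are assumptions, and `split_stereo_pcm16` is a hypothetical helper.

```python
from array import array


def split_stereo_pcm16(interleaved: bytes):
    """Split interleaved 16-bit stereo PCM into (rep, customer) sample arrays.

    Assumes samples are in the host's byte order (little-endian on most
    machines, matching WAV) and that channel 0 carries the rep.
    """
    samples = array("h")
    samples.frombytes(interleaved)  # raises ValueError on a partial sample
    if len(samples) % 2:
        raise ValueError("stereo data must contain an even number of samples")
    rep = samples[0::2]       # every first sample of each frame
    customer = samples[1::2]  # every second sample of each frame
    return rep, customer


# Two frames: the rep says (1, 2), the customer says (-1, -2).
frames = array("h", [1, -1, 2, -2]).tobytes()
rep, customer = split_stereo_pcm16(frames)
print(list(rep), list(customer))  # [1, 2] [-1, -2]
```

No model is involved in this step, so there is nothing to misattribute. A mono recording has no equivalent shortcut, which is why it needs diarization.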

aircall is fully supported. but we're going to be upfront with you.

aircall delivers a single mixed audio file — both voices in one track. agora uses speaker diarization to separate rep from customer, and it works well for standard english calls. but it's not the same as having two clean channels.

where aircall falls short:

- bilingual calls: if your reps switch between languages mid-call, or handle calls in arabic, spanish, or french alongside english, accuracy drops materially. diarization on mixed mono was not built for this.

- acoustically similar voices: rare, but on calls where the voices are too similar, the model can't reliably tell them apart.

- confidence: aircall segments are flagged in your dashboard so you always know which calls came from mono audio.

aircall is a great fit if your calls are english-dominant and you're not doing high-volume multilingual work. if you are — zoom or twilio will serve you significantly better.

| | zoom | twilio | aircall |
|---|---|---|---|
| per-speaker audio | ✅ | ✅ | ❌ mono only |
| multilingual accuracy | ✅ | ✅ | ❌ unreliable |
| english-only accuracy | ✅ | ✅ | ✅ good |
| recommended tier | A | A | B |

we'd rather tell you this upfront than let you find it out after you've connected your call center.

questions about which integration fits your team? talk to us →

```shell
# generate a key
curl -X POST https://agora-agora-hq.vercel.app/api/v1/keys

# run an eval
curl -X POST https://agora-agora-hq.vercel.app/api/v1/eval \
  -H "Content-Type: application/json" \
  -H "x-api-key: YOUR_KEY" \
  -d '{"audio_url": "https://example.com/clip.wav"}'

# get results
curl https://agora-agora-hq.vercel.app/api/v1/eval/EVAL_ID
```
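The same three calls can be sketched in Python with only the standard library. The URLs, the `x-api-key` header, and the `audio_url` field come from the curl commands above. Everything else is an assumption: the helper names are hypothetical, and the page does not document the response format, so `send` simply assumes a JSON body and does not rely on any field names.

```python
import json
import urllib.request

BASE_URL = "https://agora-agora-hq.vercel.app/api/v1"


def build_key_request() -> urllib.request.Request:
    """POST /keys: generate an API key."""
    return urllib.request.Request(f"{BASE_URL}/keys", method="POST")


def build_eval_request(api_key: str, audio_url: str) -> urllib.request.Request:
    """POST /eval: submit an audio URL for evaluation."""
    body = json.dumps({"audio_url": audio_url}).encode("utf-8")
    return urllib.request.Request(
        f"{BASE_URL}/eval",
        data=body,
        method="POST",
        headers={"Content-Type": "application/json", "x-api-key": api_key},
    )


def build_result_request(eval_id: str) -> urllib.request.Request:
    """GET /eval/<id>: fetch results for a submitted eval."""
    return urllib.request.Request(f"{BASE_URL}/eval/{eval_id}")


def send(request: urllib.request.Request, timeout: float = 30.0):
    """Send a request and decode the body, assuming the API returns JSON."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Building a request does not touch the network; call send(...) to execute it.
eval_request = build_eval_request("YOUR_KEY", "https://example.com/clip.wav")
print(eval_request.get_method(), eval_request.full_url)
```

Keeping request construction separate from sending makes it possible to inspect the headers and payload before spending one of the free evals.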